Why Tech Interviews Feel Harder Than the Actual Job

Synopsis

Tech interviews often feel harder than the job itself because they test speed, abstraction, and edge cases instead of real work. Here’s why the gap exists and how to handle it.

If you are a self-taught developer or someone breaking into tech, you have probably asked yourself why the interview feels harder than the actual work. You can design a feature, build a backend, or ship a product — yet you freeze up when asked to reverse a linked list in 20 minutes on a whiteboard. The frustration is not just psychological. It reflects a genuine structural gap between how companies hire and what the job actually requires.

This article explains where that gap comes from, what the research says about interview validity, and how candidates can navigate a system that is imperfect but unlikely to change quickly.

Key Insight

A 2022 meta-analysis by Sackett et al., published in the Journal of Applied Psychology, found that structured interviews remain the strongest predictor of job performance among common hiring tools. The catch is that most tech interviews are not well-structured, and many measure performance under artificial constraints rather than real-world job competence.

Why the Interview and the Job Measure Different Things

The standard technical interview in software development was designed to filter candidates at scale. Algorithms, data structures, and live coding problems are easier to standardize and score than realistic work simulations. They also create a common bar across thousands of candidates applying for the same role.

The problem is that this bar does not accurately reflect what most developers do most of the time. In real-world development, you have access to documentation, colleagues, version history, and time to think. You debug, collaborate, and make tradeoffs. In a 45-minute interview, you are expected to recall obscure sorting algorithms from memory, write syntactically perfect code under observation, and explain your thought process simultaneously.

According to the Greenhouse 2024 State of Job Hunting report, 61% of job seekers have been ghosted after a job interview. That figure reflects not only poor communication but also how transactional hiring has become, and how disconnected it is from the work itself. The interview is increasingly a performance, not a preview of the job.

The disconnect affects candidates at every level. A 2025 analysis of tech hiring trends published by The Pragmatic Engineer found that interviewers at major companies like Google now routinely ask LeetCode-hard problems as a baseline, a shift from just a few years ago when medium-difficulty problems were the norm. The bar keeps rising regardless of whether it predicts job performance.

What Research Actually Says About Interview Validity

Industrial-organizational psychology has spent decades studying whether interviews predict job performance. The findings are instructive.

Sackett et al.'s 2022 meta-analysis found that structured interviews (where all candidates are asked the same questions and scored consistently) are the strongest predictor of job performance among common hiring tools. Their mean operational validity was 0.42, higher than that of cognitive ability tests, personality assessments, and unstructured interviews.

The critical word there is structured. Most technical interviews are only partially structured. Coding challenges may be standardized, but behavioral rounds often vary by interviewer, questions change between candidates, and scoring is inconsistent. A 2025 meta-analysis published in the International Journal of Selection and Assessment confirmed that criterion-related validity varies substantially depending on what constructs the interview is designed to assess and how consistently it is evaluated.

In plain terms: interviews can work, but only when they are well-designed to measure skills that are actually relevant to the job. Asking a data engineer to implement a red-black tree from scratch tests something — but probably not data engineering.

Pro Tip

When preparing for tech interviews, identify the specific type of interview the company uses: algorithmic (LeetCode-style), system design, behavioral, or take-home project. Each requires different preparation. Many companies describe their process on their engineering blog or careers page, and candidates frequently share details on Glassdoor and Levels.fyi. Understanding the format before you start preparing saves significant time.

Why Time Pressure Makes It Harder

One factor that separates interviews from real work is artificial time pressure. At work, most developers have days or weeks to work through a problem. Consultations with colleagues, access to Stack Overflow, and iterative testing are all normal. In an interview, you have 30 to 45 minutes, you are being observed, and there is no Googling.

This is not trivial. Social-evaluative pressure, a well-documented phenomenon in occupational psychology, measurably reduces the quality of problem-solving. Candidates who understand a concept thoroughly may still perform worse under observation than candidates who have specifically practiced the interview format.

The 2025 Society for Human Resource Management Talent Trends Report found that the top three reasons candidates withdraw from hiring processes are that their time is not respected, the process is too long, and expectations are unclear. The average time to hire across industries is 44 days, and in tech the timeline is often longer. Candidates spend weeks preparing for interviews that may be testing skills with limited relevance to the role they would actually perform.

Understanding this does not make the pressure disappear, but it reframes the challenge. You are not being asked to demonstrate your abilities as a developer. You are being asked to perform a specific kind of timed assessment that rewards a particular type of preparation.

What the Interview Is Actually Selecting For

It is worth being direct about what most tech interviews measure.

Algorithmic interviews primarily measure how well a candidate has practiced algorithmic interviews. In the interview room, a candidate who has spent 200 hours on LeetCode will usually outperform one who spent the same 200 hours building software. This is widely understood inside the industry, which is why mock interview platforms and dedicated prep resources have become a significant market.

This creates a real equity problem. Candidates with time to prepare intensively — typically those who are between jobs, in competitive university programs, or with the financial resources to take time off — have a structural advantage over self-taught developers, career changers, and those working full-time while applying. The interview system, in its current form, filters partly on preparation access rather than job readiness.

The Jobscan State of the Job Search 2025 report found that candidates who include the exact job title on their resume are 10.6 times more likely to receive an interview invitation. The gatekeeping starts before the interview and continues through every stage of a process that may or may not reflect actual job fit.

How to Prepare Without Losing the Plot

The most effective approach combines structured practice for the interview format with clear presentation of real-world work.

Practice the format deliberately. If you are applying to companies that use LeetCode-style interviews, that is the preparation you need. Two to three focused weeks on medium-difficulty problems, timed, is more effective than months of inconsistent effort. Platforms like NeetCode, LeetCode, and system design resources like the ByteByteGo blog are practical starting points. The goal is not to love the format — it is to become comfortable enough with it that the pressure decreases.

Prepare for behavioral rounds just as seriously. Handling tough questions well is a skill in its own right, and behavioral interviews, when structured well, are strong predictors of performance. Prepare specific examples from your experience using the STAR format (situation, task, action, result), and connect them clearly to the competencies the role requires.

Make your real work visible. If you have built something — a side project, a SaaS tool, an open-source contribution, a client project — that evidence matters. Knowing what hiring managers actually notice in the first five minutes helps you prioritize what to showcase. Specific, quantified contributions to real products are more persuasive to strong hiring managers than algorithmic performance alone.

Target companies that align with your strengths. Not all tech companies use the same interview format. Many startups, mid-market companies, and non-FAANG employers use take-home projects, portfolio reviews, or structured technical conversations rather than whiteboard algorithms. If the LeetCode format is genuinely not where you perform well, it is worth identifying employers whose process matches how you actually work.

Hint

Before your next technical interview, spend 20 minutes reviewing what the company's engineering blog says about how their teams work. Understanding their actual tech stack, team structure, and product challenges gives you better material for both technical and behavioral questions — and signals genuine preparation to interviewers.

After the Interview

Post-interview silence is common, and knowing what to do while you wait matters. The same Greenhouse 2024 report found that 61% of candidates have been ghosted after an interview, a nine-point increase year over year.

If you do not hear back within the timeline given, a single polite follow-up is appropriate. If there is no timeline, one week is a reasonable window. If you receive a rejection, asking for brief feedback is worth doing. Most companies will not provide it, but some will, and specific technical feedback is genuinely useful for future preparation.

The interview process in tech remains imperfect. That is unlikely to change quickly. What you can control is how prepared you are for the format, how clearly your resume reflects your real abilities, and how strategically you choose which roles to pursue.

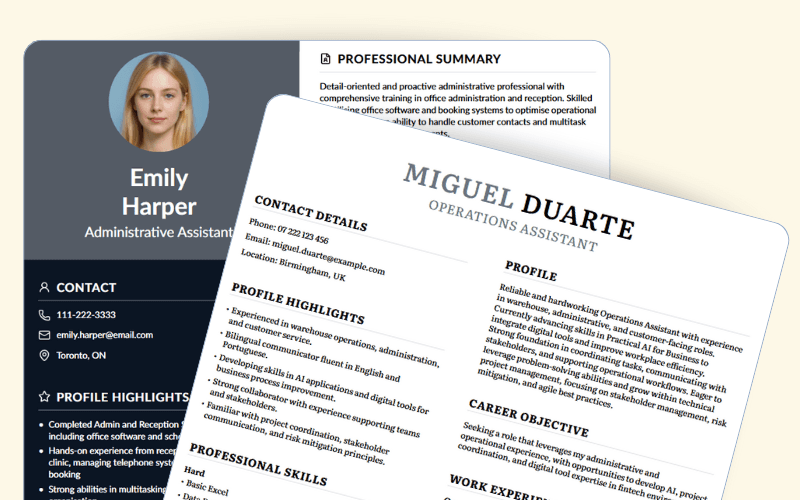

Yotru's resume builder helps candidates present their actual project experience and technical skills in a format that passes ATS screening and gives human reviewers a clear picture of what they have built. Getting through the initial filter is the first step — the interview is the second.

About the Author

Jenna Gallo

Business Development

Jenna leads business outreach at Yotru, connecting with partners and organizations to introduce the platform and build new opportunities.

Frequently Asked Questions

Why do tech interviews feel harder than the actual job?

Because interviews test abstract problem-solving such as algorithms and edge cases, while real jobs focus more on debugging, collaboration, and shipping features.